Mixture of Experts (MoE)

Without shared experts (pure routed MoE)

Examples: the Qwen3 series and the gpt-oss series.

With shared experts (hybrid / shared-expert MoE)

Examples: GLM, DeepSeek-V3, and Llama 4.
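The difference between the two families can be sketched in a few lines. This is a minimal NumPy illustration, not any particular model's implementation: the softmax router, the renormalisation over the top-k weights, and all sizes are assumptions chosen for readability. The only point it makes is that a shared expert runs for every token, while routed experts run only for the tokens that select them.

```python
import numpy as np

def swiglu_expert(x, w_gate, w_up, w_down):
    """Gated MLP expert: down(silu(gate(x)) * up(x))."""
    g = x @ w_gate.T
    silu = g / (1.0 + np.exp(-g))
    return (silu * (x @ w_up.T)) @ w_down.T

def moe_forward(x, router_w, experts, top_k, shared_expert=None):
    """x: (tokens, d_model); experts: list of (w_gate, w_up, w_down) tuples."""
    logits = x @ router_w.T                               # (tokens, n_experts)
    probs = np.exp(logits - logits.max(axis=-1, keepdims=True))
    probs /= probs.sum(axis=-1, keepdims=True)
    top_idx = np.argsort(-probs, axis=-1)[:, :top_k]      # experts chosen per token
    out = np.zeros_like(x)
    for t in range(x.shape[0]):
        weights = probs[t, top_idx[t]]
        weights = weights / weights.sum()                 # assumption: renormalise over top-k
        for w, e in zip(weights, top_idx[t]):
            out[t] += w * swiglu_expert(x[t], *experts[e])
    if shared_expert is not None:                         # shared expert sees every token
        out = out + swiglu_expert(x, *shared_expert)
    return out

rng = np.random.default_rng(0)
d_model, d_ff, n_experts = 16, 8, 4                       # toy sizes, purely illustrative

def make_expert():
    shapes = [(d_ff, d_model), (d_ff, d_model), (d_model, d_ff)]
    return tuple(0.1 * rng.normal(size=s) for s in shapes)

x = rng.normal(size=(5, d_model))
router_w = rng.normal(size=(n_experts, d_model))
experts = [make_expert() for _ in range(n_experts)]
shared = make_expert()

routed_only = moe_forward(x, router_w, experts, top_k=2)
with_shared = moe_forward(x, router_w, experts, top_k=2, shared_expert=shared)
```

In this sketch the hybrid output differs from the pure routed output by exactly the shared expert's contribution, which is the fixed per-token cost that shared-expert designs pay.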

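Dual Chunk Attention (DCA) + YaRN: a one-two punch for long-context extension

What DCA (Dual Chunk Attention) is

What it is: DCA splits a very long sequence into chunks and organizes attention within and across chunks, which makes long contexts tractable and the results more stable. The Qwen2 report states this plainly:

- If the input fits in a single chunk, DCA gives the same result as the original attention.

- Beyond one chunk, DCA captures relative position information both within and between chunks more effectively, which improves long-context performance.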
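The two properties above can be illustrated with a simplified sketch of the position remapping behind chunked attention. The parameter names (`s` for chunk size, `w` for a local window, `c` for the pretraining context length) and the exact rules are assumptions for illustration; the construction in the DCA paper may differ in detail. What the sketch shows is that a single-chunk input keeps its ordinary relative positions, while a longer input never produces a relative position the model has not seen in training.

```python
def dca_relative_positions(n, s, w, c):
    """Relative position assigned to each causal (query i, key j) pair.

    s: chunk size, w: local window, c: pretraining context length (s + w <= c).
    Entries above the diagonal stay None because causal attention never uses them.
    """
    rel = [[None] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            qi, kj = i % s, j % s               # positions inside their chunks
            gap = i // s - j // s               # how many chunks apart
            if gap == 0:                        # same chunk: ordinary relative position
                rel[i][j] = qi - kj
            elif gap == 1 and qi < w:           # adjacent chunk, first w queries:
                rel[i][j] = s + qi - kj         # keep the true distance i - j
            else:                               # distant keys: pin query to max position
                rel[i][j] = (c - 1) - kj
    return rel

short = dca_relative_positions(n=6, s=8, w=2, c=10)     # fits in one chunk
long_ = dca_relative_positions(n=40, s=8, w=2, c=10)    # spans five chunks
largest = max(v for row in long_ for v in row if v is not None)
```

With these toy values, every entry of `short` equals `i - j`, and `largest` stays below `c`, so the position encoding is never asked to represent a distance beyond the trained range.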

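What YaRN is

What it is: a length-extrapolation method for RoPE (Yet another RoPE extensioN). Its goal is to let the model generalize to contexts longer than its training length, at a lower cost.

At its core, YaRN is a RoPE scaling scheme for length extrapolation (context-window extension). It is more than a simple position scale (dividing the position by s), though; it has two parts:

- NTK-by-parts interpolation (piecewise interpolation across the RoPE frequencies/dimensions)

- Attention scaling (temperature scaling of the attention logits, which a "length scaling trick" lets you implement inside the RoPE code)

1) YaRN step one: rather than simply scaling positions, scale the RoPE frequencies per dimension

The earliest method, Position Interpolation (PI), shrinks the position index directly so that the position inputs of an over-long sequence still fall within the trained length, for example by mapping position m to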

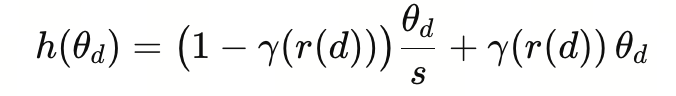

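YaRN's core, however, is NTK-by-parts interpolation: instead of scaling every dimension uniformly, it interpolates piecewise across the RoPE frequency dimensions $\theta_d$:

- Some dimensions are scaled by the PI ratio ($\theta_d / s$, equivalent to shrinking the position)

- Some dimensions are not scaled at all (they stay at $\theta_d$)

- A ramp function transitions smoothly in between

The form given in the paper is:

$$h(\theta_d) = \bigl(1 - \gamma(r(d))\bigr)\,\frac{\theta_d}{s} + \gamma(r(d))\,\theta_d$$

Here $r(d)$ is the ratio of the original context length to the wavelength of dimension $d$, and $\gamma$ is a piecewise-linear transition (ramp) function controlled by $\alpha$ and $\beta$; it decides which dimensions are scaled and which are not.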

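Intuition:

You can think of the different RoPE dimensions as different frequencies. Rather than compressing all frequencies by the same ratio, YaRN treats high and low frequencies separately, which reduces the problems that RoPE's high-frequency components cause under large window extensions.

So, is it a position scale? It can be seen as borrowing part of the position interpolation (PI) idea, but more precisely YaRN interpolates selectively across RoPE frequencies/dimensions rather than scaling all positions uniformly.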
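The per-dimension behaviour can be made concrete with a small sketch. It assumes the standard RoPE frequencies `base ** (-2d / dim)` and a linear ramp clipped to [0, 1]; the defaults `alpha=1` and `beta=32` and the sizes in the usage lines are illustrative assumptions, not values taken from these notes. Plain PI is included for comparison.

```python
import math

def rope_freqs(dim, base=10000.0):
    """Original RoPE frequencies theta_d for d = 0 .. dim/2 - 1."""
    return [base ** (-2.0 * d / dim) for d in range(dim // 2)]

def pi_freqs(dim, s, base=10000.0):
    """Position Interpolation: every frequency is divided by s."""
    return [theta / s for theta in rope_freqs(dim, base)]

def ntk_by_parts_freqs(dim, s, orig_len, base=10000.0, alpha=1.0, beta=32.0):
    """Blend theta_d / s and theta_d per dimension with a linear ramp."""
    out = []
    for theta in rope_freqs(dim, base):
        wavelength = 2.0 * math.pi / theta
        r = orig_len / wavelength                 # rotations inside the trained window
        gamma = min(1.0, max(0.0, (r - alpha) / (beta - alpha)))
        out.append((1.0 - gamma) * theta / s + gamma * theta)
    return out

original = rope_freqs(128)
pi = pi_freqs(128, s=4.0)
yarn = ntk_by_parts_freqs(128, s=4.0, orig_len=4096)
```

In this toy setting the highest-frequency dimension (`yarn[0]`) is left untouched, the lowest-frequency one (`yarn[-1]`) matches PI, and every other dimension lands somewhere between the two.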

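2) YaRN step two: attention scaling (a temperature on the attention logits)

YaRN also introduces an attention scaling / temperature $t$: the attention logits are scaled before the softmax. The paper rewrites the attention weight computation in a form that includes $t$.

It also makes a very practical engineering point: because RoPE can be reparameterized as a set of 2D rotation matrices, this attention scaling can be done with a "length scaling trick". Multiplying the complex-form rotary embedding by a constant is equivalent to scaling $q$ and $k$ by the same factor, which achieves the attention scaling without changing the attention kernel code.

Intuition: after window extension the attention score distribution can become too sharp or too flat; temperature scaling pulls the softmax behavior back toward the range seen in training.

In short, YaRN is defined as the combination of NTK-by-parts interpolation and attention scaling.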
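The equivalence behind the length scaling trick is easy to check numerically. The sketch below assumes the logit has the form `q·k / (t * sqrt(d))` and uses the fit `sqrt(1/t) = 0.1 * ln(s) + 1`, which is my recollection of the value the YaRN paper recommends for LLaMA-family models; treat both the constant and the helper name `yarn_mscale` as assumptions rather than something stated in these notes.

```python
import math

def yarn_mscale(s):
    """Assumed fit for sqrt(1/t) as a function of the scale factor s."""
    return 0.1 * math.log(s) + 1.0 if s > 1.0 else 1.0

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

q = [0.3, -1.2, 0.7, 0.5]
k = [1.1, 0.4, -0.6, 0.9]
d = len(q)

m = yarn_mscale(4.0)          # factor applied to both q and k
t = 1.0 / (m * m)             # equivalent softmax temperature

# Option A: change the attention kernel to divide the logits by t.
logit_with_temperature = dot(q, k) / (t * math.sqrt(d))

# Option B: leave the kernel alone and scale q and k (e.g. via the rotary embedding).
q_scaled = [m * x for x in q]
k_scaled = [m * x for x in k]
logit_with_scaled_qk = dot(q_scaled, k_scaled) / math.sqrt(d)
```

Both options give the same logit, which is why the scaling can live in the code that builds the rotary embeddings.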

Qwen

Qwen2

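- Focus: GQA + (optional) MoE + long context via DCA/YaRN

- Long context: Qwen2 introduces Dual Chunk Attention (DCA) plus YaRN to extend the context window.

- It also keeps SwiGLU, RoPE, QKV bias, and RMSNorm (pre-norm).

In one sentence: Qwen2 is a fairly standard decoder-only Transformer whose main additions are GQA (a smaller KV cache) and long-context support (DCA/YaRN), with an MoE variant also available.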
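To make "GQA saves KV" concrete, here is a minimal NumPy sketch of causal grouped-query attention for a single sequence. The function name, the toy sizes, and the omission of RoPE and the output projection are simplifications made for illustration; this is not Qwen's implementation. The K and V projections produce only `n_kv_heads` heads, and each one is reused by a group of query heads.

```python
import numpy as np

def gqa_attention(x, wq, wk, wv, n_q_heads, n_kv_heads):
    """Causal grouped-query attention; x: (seq, d_model)."""
    seq = x.shape[0]
    head_dim = wq.shape[0] // n_q_heads
    group = n_q_heads // n_kv_heads                  # query heads per K/V head
    q = (x @ wq.T).reshape(seq, n_q_heads, head_dim)
    k = (x @ wk.T).reshape(seq, n_kv_heads, head_dim)
    v = (x @ wv.T).reshape(seq, n_kv_heads, head_dim)
    k = np.repeat(k, group, axis=1)                  # share each K/V head across its group
    v = np.repeat(v, group, axis=1)
    scores = np.einsum("qhd,khd->hqk", q, k) / np.sqrt(head_dim)
    future = np.triu(np.ones((seq, seq), dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)       # causal mask
    scores = scores - scores.max(axis=-1, keepdims=True)
    probs = np.exp(scores)
    probs /= probs.sum(axis=-1, keepdims=True)
    return np.einsum("hqk,khd->qhd", probs, v).reshape(seq, n_q_heads * head_dim)

rng = np.random.default_rng(0)
seq, d_model, n_q_heads, n_kv_heads, head_dim = 5, 16, 4, 2, 4   # toy sizes
x = rng.normal(size=(seq, d_model))
wq = rng.normal(size=(n_q_heads * head_dim, d_model))
wk = rng.normal(size=(n_kv_heads * head_dim, d_model))
wv = rng.normal(size=(n_kv_heads * head_dim, d_model))
out = gqa_attention(x, wq, wk, wv, n_q_heads, n_kv_heads)

# Per-token cache size: only the un-repeated K and V need to be stored.
kv_cache_gqa = 2 * n_kv_heads * head_dim
kv_cache_mha = 2 * n_q_heads * head_dim
```

Only the `n_kv_heads` K/V heads have to be cached, so the cache shrinks by the factor `n_q_heads / n_kv_heads` relative to full multi-head attention.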

Qwen3

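- Keeps the Qwen2.5 architecture details, improves training stability, and has MoE flagship models

- Removes the QKV bias (used in Qwen2) and introduces QK-Norm to improve training stability

In one sentence: Qwen3 is an evolution of the Qwen2/2.5 line. It continues with GQA (a smaller KV cache) plus RoPE/RMSNorm/SwiGLU, uses details such as QK-Norm and dropping the QKV bias to stabilize large-model training, and offers MoE as its highest-performance configuration.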
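A rough sketch of what QK-Norm does: the `q_norm.weight` and `k_norm.weight` shapes of `(128,)` in the Qwen3-30B-A3B dump in this section suggest an RMSNorm applied per head over `head_dim`, and the code below assumes exactly that. The function names and the `eps` value are illustrative assumptions.

```python
import numpy as np

def rms_norm(x, weight, eps=1e-6):
    """RMSNorm over the last axis."""
    return x / np.sqrt(np.mean(x * x, axis=-1, keepdims=True) + eps) * weight

def qk_norm(q, k, q_weight, k_weight):
    """q: (seq, n_q_heads, head_dim); k: (seq, n_kv_heads, head_dim)."""
    return rms_norm(q, q_weight), rms_norm(k, k_weight)

rng = np.random.default_rng(0)
head_dim = 128
q = 1000.0 * rng.normal(size=(3, 32, head_dim))   # deliberately huge activations
k = rng.normal(size=(3, 4, head_dim))
q_n, k_n = qk_norm(q, k, np.ones(head_dim), np.ones(head_dim))

q_rms = np.sqrt(np.mean(q_n ** 2, axis=-1))       # per-head RMS after normalisation
```

However large the raw query activations are, each normalised head vector has RMS close to 1 (before the learned weight), which bounds the attention logits. That is the usual argument for why this helps training stability.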

Actual parameter shapes of Qwen3-30B-A3B
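As a sanity check on the name "30B-A3B", the per-layer shapes listed in this section can be added up. The shapes and the counts of 48 layers and 128 experts come from the dump; the top-8 routing, the untied `lm_head` with the same shape as the embedding, and the final norm of shape `(2048,)` are assumptions that the dump itself does not show.

```python
def numel(*shape):
    n = 1
    for dim in shape:
        n *= dim
    return n

N_LAYERS, N_EXPERTS = 48, 128
TOP_K = 8                                   # ASSUMPTION: experts activated per token

embed = numel(151936, 2048)
attn = (numel(4096, 2048) + 2 * numel(512, 2048) + numel(2048, 4096)   # q, k, v, o
        + 2 * numel(128))                                               # q_norm, k_norm
router = numel(128, 2048)                                               # mlp.gate
expert = 2 * numel(768, 2048) + numel(2048, 768)                        # gate, up, down
norms = 2 * numel(2048)                                                 # two layer norms

per_layer_total = attn + router + N_EXPERTS * expert + norms
per_layer_active = attn + router + TOP_K * expert + norms

extras = embed + embed + numel(2048)        # ASSUMPTION: untied lm_head + final norm
total = N_LAYERS * per_layer_total + extras
active = N_LAYERS * per_layer_active + extras

print(f"total  ~ {total / 1e9:.1f}B parameters")
print(f"active ~ {active / 1e9:.1f}B parameters per token")
```

Under these assumptions the total comes out at roughly 30.5B and the per-token active count at roughly 3.3B, consistent with the model name. Almost all of the total sits in the experts, which is why the active count is so much smaller.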

==== Qwen3-30B-A3B Parameter Shapes (layer 1 of 48) ====

model.embed_tokens.weight | (151936, 2048)

# GQA is visible here: hidden size 2048, head_dim 128; the 32 query heads form 4 groups of 8, and each group shares one of the 4 K/V heads

model.layers.0.self_attn.q_proj.weight | (4096, 2048)

model.layers.0.self_attn.k_proj.weight | (512, 2048)

model.layers.0.self_attn.v_proj.weight | (512, 2048)

model.layers.0.self_attn.o_proj.weight | (2048, 4096)

model.layers.0.self_attn.q_norm.weight | (128,)

model.layers.0.self_attn.k_norm.weight | (128,)

model.layers.0.mlp.gate.weight | (128, 2048)

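# Each expert is a gated MLP rather than a plain linear layer: the multiplicative interaction makes better use of each FLOP and gives more expressive power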

model.layers.0.mlp.experts.0.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.0.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.0.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.1.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.1.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.1.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.2.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.2.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.2.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.3.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.3.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.3.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.4.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.4.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.4.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.5.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.5.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.5.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.6.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.6.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.6.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.7.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.7.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.7.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.8.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.8.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.8.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.9.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.9.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.9.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.10.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.10.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.10.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.11.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.11.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.11.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.12.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.12.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.12.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.13.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.13.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.13.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.14.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.14.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.14.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.15.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.15.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.15.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.16.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.16.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.16.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.17.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.17.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.17.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.18.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.18.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.18.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.19.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.19.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.19.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.20.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.20.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.20.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.21.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.21.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.21.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.22.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.22.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.22.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.23.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.23.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.23.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.24.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.24.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.24.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.25.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.25.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.25.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.26.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.26.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.26.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.27.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.27.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.27.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.28.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.28.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.28.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.29.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.29.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.29.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.30.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.30.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.30.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.31.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.31.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.31.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.32.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.32.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.32.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.33.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.33.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.33.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.34.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.34.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.34.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.35.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.35.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.35.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.36.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.36.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.36.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.37.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.37.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.37.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.38.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.38.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.38.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.39.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.39.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.39.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.40.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.40.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.40.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.41.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.41.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.41.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.42.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.42.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.42.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.43.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.43.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.43.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.44.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.44.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.44.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.45.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.45.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.45.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.46.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.46.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.46.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.47.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.47.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.47.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.48.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.48.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.48.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.49.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.49.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.49.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.50.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.50.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.50.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.51.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.51.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.51.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.52.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.52.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.52.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.53.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.53.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.53.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.54.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.54.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.54.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.55.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.55.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.55.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.56.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.56.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.56.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.57.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.57.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.57.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.58.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.58.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.58.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.59.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.59.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.59.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.60.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.60.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.60.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.61.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.61.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.61.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.62.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.62.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.62.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.63.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.63.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.63.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.64.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.64.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.64.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.65.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.65.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.65.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.66.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.66.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.66.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.67.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.67.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.67.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.68.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.68.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.68.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.69.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.69.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.69.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.70.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.70.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.70.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.71.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.71.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.71.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.72.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.72.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.72.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.73.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.73.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.73.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.74.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.74.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.74.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.75.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.75.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.75.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.76.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.76.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.76.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.77.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.77.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.77.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.78.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.78.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.78.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.79.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.79.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.79.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.80.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.80.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.80.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.81.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.81.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.81.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.82.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.82.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.82.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.83.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.83.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.83.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.84.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.84.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.84.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.85.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.85.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.85.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.86.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.86.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.86.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.87.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.87.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.87.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.88.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.88.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.88.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.89.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.89.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.89.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.90.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.90.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.90.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.91.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.91.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.91.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.92.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.92.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.92.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.93.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.93.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.93.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.94.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.94.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.94.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.95.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.95.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.95.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.96.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.96.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.96.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.97.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.97.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.97.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.98.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.98.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.98.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.99.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.99.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.99.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.100.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.100.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.100.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.101.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.101.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.101.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.102.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.102.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.102.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.103.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.103.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.103.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.104.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.104.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.104.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.105.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.105.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.105.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.106.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.106.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.106.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.107.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.107.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.107.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.108.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.108.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.108.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.109.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.109.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.109.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.110.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.110.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.110.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.111.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.111.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.111.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.112.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.112.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.112.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.113.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.113.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.113.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.114.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.114.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.114.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.115.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.115.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.115.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.116.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.116.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.116.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.117.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.117.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.117.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.118.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.118.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.118.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.119.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.119.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.119.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.120.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.120.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.120.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.121.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.121.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.121.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.122.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.122.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.122.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.123.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.123.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.123.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.124.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.124.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.124.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.125.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.125.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.125.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.126.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.126.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.126.down_proj.weight | (2048, 768)

model.layers.0.mlp.experts.127.gate_proj.weight | (768, 2048)

model.layers.0.mlp.experts.127.up_proj.weight | (768, 2048)

model.layers.0.mlp.experts.127.down_proj.weight | (2048, 768)

model.layers.0.input_layernorm.weight | (2048,)

model.layers.0.post_attention_layernorm.weight | (2048,)

DeepSeek

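Represented by V2/V3; built around MLA + DeepSeekMoE

plus Transformer details such as RoPE and RMSNorm

In one sentence: DeepSeek reads as an architecture line deeply customized for large-scale MoE and efficient long-context inference; its core selling points are MLA, which shrinks the KV cache aggressively, and sparse computation over a large MoE.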
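A back-of-the-envelope comparison shows where the KV saving comes from. The sketch assumes that MLA caches one compressed latent vector plus a small shared RoPE key per token per layer, instead of full per-head K and V. The sizes below (128 heads of dimension 128, latent dimension 512, RoPE key dimension 64) are assumed illustrative values in the style reported for DeepSeek-V2/V3; they are not taken from these notes.

```python
def kv_cache_per_token(n_kv_heads, head_dim):
    """Elements cached per token per layer when full K and V heads are stored."""
    return 2 * n_kv_heads * head_dim

def mla_cache_per_token(latent_dim, rope_dim):
    """Elements cached per token per layer with a compressed latent plus a RoPE key."""
    return latent_dim + rope_dim

# ASSUMED sizes, for illustration only.
mha_elems = kv_cache_per_token(n_kv_heads=128, head_dim=128)
mla_elems = mla_cache_per_token(latent_dim=512, rope_dim=64)
ratio = mha_elems / mla_elems

print(f"MHA: {mha_elems} elements, MLA: {mla_elems} elements, ratio ~ {ratio:.0f}x")
```

With these assumed sizes the per-token cache drops from 32768 to 576 elements per layer, a reduction of more than 50x.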

GLM

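Uses shared experts and bias terms

Compared with a purely sparse MoE network, shared experts make the model more stable, but they add an unavoidable fixed cost (they run for every token) and may leave some expert parameters wasted.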

GLM-4.5-Air

Structure of the first two layers

==== Parameter Shapes ====

model.embed_tokens.weight | (151552, 4096)

model.layers.0.self_attn.q_proj.weight | (12288, 4096)

model.layers.0.self_attn.q_proj.bias | (12288,)

model.layers.0.self_attn.k_proj.weight | (1024, 4096)

model.layers.0.self_attn.k_proj.bias | (1024,)

model.layers.0.self_attn.v_proj.weight | (1024, 4096)

model.layers.0.self_attn.v_proj.bias | (1024,)

model.layers.0.self_attn.o_proj.weight | (4096, 12288)

model.layers.0.mlp.gate_proj.weight | (10944, 4096)

model.layers.0.mlp.up_proj.weight | (10944, 4096)

model.layers.0.mlp.down_proj.weight | (4096, 10944)

model.layers.0.input_layernorm.weight | (4096,)

model.layers.0.post_attention_layernorm.weight | (4096,)

model.layers.1.self_attn.q_proj.weight | (12288, 4096)

model.layers.1.self_attn.q_proj.bias | (12288,)

model.layers.1.self_attn.k_proj.weight | (1024, 4096)

model.layers.1.self_attn.k_proj.bias | (1024,)

model.layers.1.self_attn.v_proj.weight | (1024, 4096)

model.layers.1.self_attn.v_proj.bias | (1024,)

model.layers.1.self_attn.o_proj.weight | (4096, 12288)

model.layers.1.mlp.experts.0.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.0.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.0.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.1.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.1.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.1.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.2.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.2.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.2.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.3.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.3.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.3.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.4.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.4.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.4.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.5.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.5.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.5.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.6.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.6.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.6.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.7.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.7.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.7.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.8.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.8.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.8.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.9.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.9.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.9.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.10.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.10.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.10.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.11.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.11.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.11.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.12.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.12.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.12.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.13.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.13.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.13.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.14.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.14.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.14.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.15.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.15.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.15.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.16.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.16.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.16.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.17.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.17.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.17.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.18.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.18.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.18.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.19.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.19.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.19.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.20.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.20.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.20.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.21.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.21.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.21.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.22.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.22.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.22.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.23.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.23.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.23.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.24.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.24.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.24.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.25.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.25.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.25.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.26.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.26.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.26.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.27.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.27.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.27.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.28.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.28.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.28.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.29.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.29.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.29.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.30.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.30.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.30.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.31.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.31.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.31.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.32.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.32.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.32.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.33.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.33.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.33.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.34.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.34.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.34.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.35.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.35.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.35.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.36.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.36.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.36.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.37.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.37.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.37.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.38.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.38.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.38.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.39.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.39.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.39.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.40.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.40.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.40.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.41.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.41.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.41.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.42.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.42.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.42.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.43.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.43.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.43.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.44.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.44.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.44.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.45.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.45.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.45.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.46.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.46.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.46.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.47.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.47.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.47.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.48.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.48.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.48.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.49.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.49.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.49.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.50.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.50.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.50.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.51.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.51.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.51.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.52.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.52.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.52.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.53.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.53.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.53.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.54.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.54.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.54.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.55.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.55.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.55.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.56.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.56.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.56.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.57.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.57.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.57.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.58.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.58.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.58.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.59.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.59.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.59.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.60.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.60.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.60.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.61.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.61.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.61.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.62.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.62.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.62.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.63.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.63.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.63.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.64.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.64.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.64.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.65.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.65.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.65.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.66.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.66.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.66.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.67.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.67.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.67.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.68.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.68.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.68.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.69.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.69.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.69.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.70.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.70.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.70.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.71.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.71.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.71.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.72.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.72.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.72.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.73.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.73.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.73.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.74.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.74.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.74.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.75.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.75.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.75.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.76.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.76.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.76.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.77.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.77.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.77.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.78.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.78.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.78.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.79.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.79.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.79.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.80.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.80.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.80.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.81.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.81.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.81.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.82.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.82.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.82.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.83.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.83.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.83.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.84.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.84.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.84.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.85.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.85.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.85.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.86.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.86.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.86.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.87.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.87.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.87.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.88.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.88.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.88.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.89.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.89.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.89.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.90.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.90.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.90.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.91.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.91.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.91.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.92.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.92.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.92.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.93.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.93.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.93.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.94.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.94.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.94.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.95.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.95.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.95.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.96.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.96.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.96.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.97.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.97.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.97.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.98.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.98.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.98.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.99.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.99.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.99.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.100.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.100.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.100.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.101.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.101.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.101.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.102.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.102.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.102.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.103.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.103.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.103.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.104.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.104.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.104.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.105.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.105.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.105.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.106.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.106.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.106.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.107.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.107.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.107.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.108.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.108.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.108.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.109.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.109.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.109.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.110.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.110.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.110.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.111.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.111.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.111.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.112.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.112.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.112.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.113.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.113.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.113.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.114.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.114.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.114.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.115.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.115.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.115.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.116.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.116.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.116.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.117.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.117.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.117.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.118.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.118.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.118.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.119.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.119.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.119.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.120.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.120.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.120.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.121.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.121.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.121.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.122.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.122.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.122.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.123.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.123.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.123.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.124.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.124.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.124.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.125.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.125.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.125.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.126.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.126.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.126.down_proj.weight | (4096, 1408)

model.layers.1.mlp.experts.127.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.127.up_proj.weight | (1408, 4096)

model.layers.1.mlp.experts.127.down_proj.weight | (4096, 1408)

model.layers.1.mlp.gate.weight | (128, 4096)

model.layers.1.mlp.shared_experts.gate_proj.weight | (1408, 4096)

model.layers.1.mlp.shared_experts.up_proj.weight | (1408, 4096)

model.layers.1.mlp.shared_experts.down_proj.weight | (4096, 1408)
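
The layer-1 MLP shapes listed above (routed experts, router `gate`, and `shared_experts`) can be tallied into parameter counts. The following is a minimal sketch, not a loader for any real checkpoint: `parse_entry` and `mlp_entries` are hypothetical helper names, and the entries are regenerated in the dump's `name | shape` format on the assumption that expert indices run 0 to 127 (consistent with the `(128, 4096)` router weight) and that every routed expert has the same shapes.

```python
import ast
from math import prod


def parse_entry(line):
    """Split one 'name | shape' dump line into (name, shape tuple)."""
    name, shape_text = line.split("|")
    return name.strip(), tuple(ast.literal_eval(shape_text.strip()))


def mlp_entries(layer=1, num_experts=128, hidden=4096, inter=1408):
    """Regenerate one layer's MoE MLP lines in the dump's format.

    Assumption: all routed experts share the shapes shown in the dump.
    """
    prefix = f"model.layers.{layer}.mlp"
    lines = []
    for e in range(num_experts):
        lines.append(f"{prefix}.experts.{e}.gate_proj.weight | ({inter}, {hidden})")
        lines.append(f"{prefix}.experts.{e}.up_proj.weight | ({inter}, {hidden})")
        lines.append(f"{prefix}.experts.{e}.down_proj.weight | ({hidden}, {inter})")
    # Router: one score row per routed expert.
    lines.append(f"{prefix}.gate.weight | ({num_experts}, {hidden})")
    # Shared expert: same three projections, applied to every token.
    lines.append(f"{prefix}.shared_experts.gate_proj.weight | ({inter}, {hidden})")
    lines.append(f"{prefix}.shared_experts.up_proj.weight | ({inter}, {hidden})")
    lines.append(f"{prefix}.shared_experts.down_proj.weight | ({hidden}, {inter})")
    return lines


entries = [parse_entry(line) for line in mlp_entries()]

# ".experts." does not match "shared_experts." because of the leading dot.
routed = sum(prod(shape) for name, shape in entries if ".experts." in name)
shared = sum(prod(shape) for name, shape in entries if ".shared_experts." in name)
router = sum(prod(shape) for name, shape in entries if name.endswith(".mlp.gate.weight"))
total = routed + shared + router

print(f"routed experts: {routed:,}")
print(f"shared expert:  {shared:,}")
print(f"router gate:    {router:,}")
print(f"MLP total:      {total:,}")
```

Under these assumptions each expert holds 3 × 1408 × 4096 = 17,301,504 weights, so the 128 routed experts account for nearly all of the roughly 2.23 billion MLP parameters in this layer, while the shared expert and the router are comparatively small.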

model.layers.1.input_layernorm.weight | (4096,)

model.layers.1.post_attention_layernorm.weight | (4096,)

Approach to building LLM applications

DeepSeek